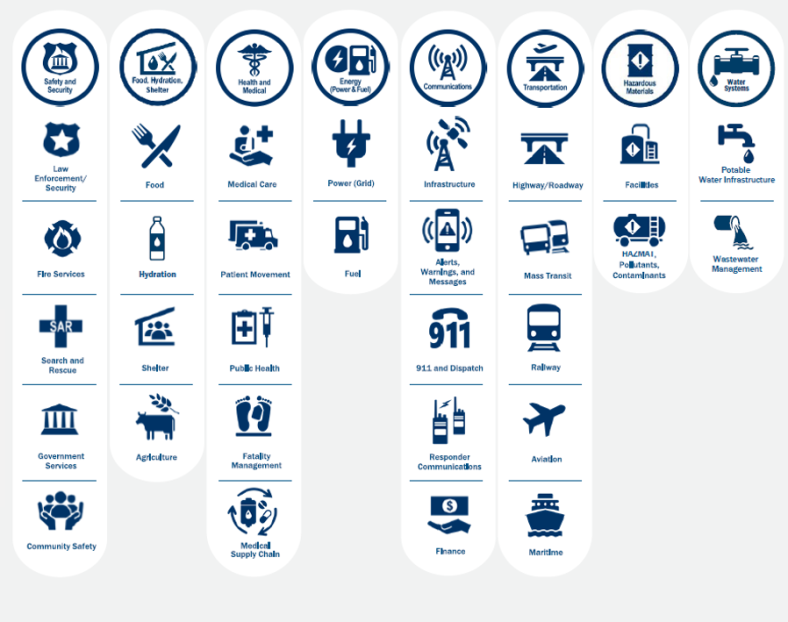

The annual National Preparedness Report (NPR) is a requirement of Presidential Policy Directive 8, which states that the NPR is based on the National Preparedness Goal. The National Preparedness Goal, per the FEMA website, is “A secure and resilient nation with the capabilities required across the whole community to prevent, protect against, mitigate, respond to, and recover from the threats and hazards that pose the greatest risk.” The capabilities indicated in the National Preparedness Goal are specifically the 32 Core Capabilities.

The 2024 NPR is developed to reflect data and information from 2023. As with previous NPRs, I have a lot of concern about the ultimate value of the document. While I’m sure a lot of time, effort, and money were spent gathering an abundance of data from across the nation to support this report, this year’s report, following the unfortunate trend of its predecessors, doesn’t seem to be worth the investment. As with the others, this report falls short on adequate scope, information, and recommendations. Certainly, there is a challenge to acknowledge, not only in gathering a massive quantity of information from across the country but also in examining and reporting this information in aggregate, as most federal reports are burdened to do. That said, I see little excuse to not provide a meaningful report.

In this year’s report, following the introductory materials, is a section on risks, which is largely a reflection of the high-impact disasters of 2023 seen across the US; the most challenging threats and hazards; and the intersections of risk and vulnerability. All in all, this is an adequate snapshot of these topics in summary, with some solid points and a level of analysis that I would expect through the rest of the document, including trends over time and identification of factors which influence the findings. There are several maps and charts which provide good data visualization and several mentions of bridging data between agencies such as FEMA, NOAA, and CDC. A good start.

The next section is Capabilities. This section has two areas of narrative – community preparedness and individual and household preparedness. Given the significant efforts to bolster capabilities throughout the federal government and in state, tribal, and territorial governments, these levels seem conspicuously missing if we take ‘communities’ to mean simply local governments, so I’m not sure why this is specifically titled community preparedness. Does it not include the efforts of states or others? Page 18 of the report provides a chart similar to what we’ve seen in previous reports which shows how much money was spent on each capability (in communities… again, what does this include or exclude?) for 2023. The chart also indicates the percentage of communities achieving their capability targets.

As with the reports from previous years, I ask: So what? This is a snapshot in time and lacking context. A trend analysis accounting for at least the past several years would be quite insightful, as would some description of what the funds within each capability were primarily spent on – broadly planning, organizing, equipping, training, and exercises, but I’d like to see even more specifics. There are a few random examples in the narrative, but a lot is still lacking. I’d also like to see some analysis of relative success or value of these investments. In regard specifically to the percentage of communities that feel they have achieved their capability target, I have to eye roll a bit at this, as this is often the most subjective (and sometimes smoke and mirrors) aspect of the Threat and Hazard Identification and Risk Assessment (THIRA). This chart currently has little value other than a ‘gee whiz’ factor of seeing how much money is spent on each capability.

I’ll also include a specific observation of mine here: the Core Capability of Mass Care Services, which in the previous year’s report was indicated as a high-priority capability, continues a trend (I’m only aware of the trend from looking back at previous reports since trend data is not included in the report) of having one of the lowest achievement percentages and investments. I’m hopeful that’s why it’s included in the next section as a focus area.

The other area of narrative in the Capabilities section is individual and household preparedness. All in all, the information presented here is fine and even includes a slight bit of trend analysis, though in 2023 a much more comprehensive reporting of this information was provided under separate cover. I think an improved version of something like the 2023 report should be incorporated into the NPR.

The next section of the NPR is Focus Areas, which includes the Core Capabilities of Mass Care Services, Public Information and Warning, Infrastructure Systems, and Cybersecurity. Each focus area includes narrative on risk, capabilities and gaps, and management opportunities – which all provide great information. There is a brief mention of how these focus areas were selected. While I’m fine with having a deeper analysis of certain focus areas, I think the NPR should still provide a comprehensive review of all Core Capabilities.

While the management opportunities listed for each of the four focus areas are essentially recommendations, the report itself only provides two recommendations which are labeled as such. These recommendations are identified in the document’s introduction with a bit of narrative (and in the conclusion with none), which thankfully provides some suggestions for actionable implementation, but I was left feeling both surprised and disappointed that the National Preparedness Report, which really should be providing an analysis of all 32 Core Capabilities which serve as the foundation for the nation’s preparedness goal, has only two recommendations for improving our preparedness. Two. That’s it. There should be an abundance of recommendations. This is the information that emergency managers and decision-makers within the field of practice need within federal agencies and state, local, tribal, and territorial (SLTT) governments. Another missed opportunity to provide value.

The 2024 NPR is extremely similar to the past several years in format and general content, and as such I’m not surprised by the lack of value. I continue to stand by my statement across these past several years in regard to this report: the emergency management community should not be accepting this type of reporting. While I recognize that PPD-8 defines the audience for this report as the President and the Secretary of Homeland Security, the utility of such a report can and should have a much broader reach across all of emergency management, and ideally to taxpayers as well, who should be able to access better information on how their tax dollars are spent within preparedness – which impacts everyone. States, UASIs, and other entities that submit information annually for this report should also be disappointed that this is what is published about their hard work, and the emergency management membership organizations should also be demanding better. This report has the potential to be meaningful, insightful, and influential, yet FEMA misses that opportunity every single year. The data exists, and the stories of the activities, accomplishments, and gaps can all be told. With the application of some reasonable analysis and recommendations, the document could be much more impactful.

It’s been said by many that emergency managers are notorious for not marketing well, and this document is proof positive of that. Those of us working in this profession know there is so much more to be examined and described that can tell of not only what we have accomplished but also of the work to be done. We find ourselves in a time where the purpose and value of FEMA are being questioned by a number of people; a time where some inefficiencies, missteps, and even failures are being put under a very critical microscope and seemingly being used to fuel a suggestion of eliminating FEMA. Greater efficiencies can certainly be identified and gaps addressed, but our reluctance to tell the stories of what we do clearly contributes to the misunderstandings and severe lack of awareness that exist about our field of practice – one in which there is no organization of greater prominence and importance than FEMA. While the NPR is not at fault for these shortcomings, it is a contributor. When reports like this miss opportunities to do more and be more year over year, that snowballs and becomes a much greater issue. We need to do better.

© 2015 – Timothy Riecker, CEDP